|

Reference documentation for deal.II version 9.0.0

|

|

Reference documentation for deal.II version 9.0.0

|

#include <deal.II/base/partitioner.h>

Public Member Functions | |

| Partitioner () | |

| Partitioner (const unsigned int size) | |

| Partitioner (const IndexSet &locally_owned_indices, const IndexSet &ghost_indices_in, const MPI_Comm communicator_in) | |

| Partitioner (const IndexSet &locally_owned_indices, const MPI_Comm communicator_in) | |

| virtual void | reinit (const IndexSet &vector_space_vector_index_set, const IndexSet &read_write_vector_index_set, const MPI_Comm &communicator) |

| void | set_owned_indices (const IndexSet &locally_owned_indices) |

| void | set_ghost_indices (const IndexSet &ghost_indices, const IndexSet &larger_ghost_index_set=IndexSet()) |

| types::global_dof_index | size () const |

| unsigned int | local_size () const |

| const IndexSet & | locally_owned_range () const |

| std::pair< types::global_dof_index, types::global_dof_index > | local_range () const |

| bool | in_local_range (const types::global_dof_index global_index) const |

| unsigned int | global_to_local (const types::global_dof_index global_index) const |

| types::global_dof_index | local_to_global (const unsigned int local_index) const |

| bool | is_ghost_entry (const types::global_dof_index global_index) const |

| const IndexSet & | ghost_indices () const |

| unsigned int | n_ghost_indices () const |

| const std::vector< std::pair< unsigned int, unsigned int > > & | ghost_indices_within_larger_ghost_set () const |

| const std::vector< std::pair< unsigned int, unsigned int > > & | ghost_targets () const |

| const std::vector< std::pair< unsigned int, unsigned int > > & | import_indices () const |

| unsigned int | n_import_indices () const |

| const std::vector< std::pair< unsigned int, unsigned int > > & | import_targets () const |

| bool | is_compatible (const Partitioner &part) const |

| bool | is_globally_compatible (const Partitioner &part) const |

| unsigned int | this_mpi_process () const |

| unsigned int | n_mpi_processes () const |

| const MPI_Comm & | get_communicator () const |

| virtual const MPI_Comm & | get_mpi_communicator () const |

| bool | ghost_indices_initialized () const |

| template<typename Number > | |

| void | export_to_ghosted_array_start (const unsigned int communication_channel, const ArrayView< const Number > &locally_owned_array, const ArrayView< Number > &temporary_storage, const ArrayView< Number > &ghost_array, std::vector< MPI_Request > &requests) const |

| template<typename Number > | |

| void | export_to_ghosted_array_finish (const ArrayView< Number > &ghost_array, std::vector< MPI_Request > &requests) const |

| template<typename Number > | |

| void | import_from_ghosted_array_start (const VectorOperation::values vector_operation, const unsigned int communication_channel, const ArrayView< Number > &ghost_array, const ArrayView< Number > &temporary_storage, std::vector< MPI_Request > &requests) const |

| template<typename Number > | |

| void | import_from_ghosted_array_finish (const VectorOperation::values vector_operation, const ArrayView< const Number > &temporary_storage, const ArrayView< Number > &locally_owned_storage, const ArrayView< Number > &ghost_array, std::vector< MPI_Request > &requests) const |

| std::size_t | memory_consumption () const |

Public Member Functions inherited from LinearAlgebra::CommunicationPatternBase Public Member Functions inherited from LinearAlgebra::CommunicationPatternBase | |

| virtual | ~CommunicationPatternBase ()=default |

Static Public Member Functions | |

| static ::ExceptionBase & | ExcIndexNotPresent (types::global_dof_index arg1, unsigned int arg2) |

| static ::ExceptionBase & | ExcGhostIndexArrayHasWrongSize (unsigned int arg1, unsigned int arg2, unsigned int arg3) |

Private Attributes | |

| types::global_dof_index | global_size |

| IndexSet | locally_owned_range_data |

| std::pair< types::global_dof_index, types::global_dof_index > | local_range_data |

| IndexSet | ghost_indices_data |

| unsigned int | n_ghost_indices_data |

| std::vector< std::pair< unsigned int, unsigned int > > | ghost_targets_data |

| std::vector< std::pair< unsigned int, unsigned int > > | import_indices_data |

| unsigned int | n_import_indices_data |

| std::vector< std::pair< unsigned int, unsigned int > > | import_targets_data |

| std::vector< unsigned int > | import_indices_chunks_by_rank_data |

| unsigned int | n_ghost_indices_in_larger_set |

| std::vector< unsigned int > | ghost_indices_subset_chunks_by_rank_data |

| std::vector< std::pair< unsigned int, unsigned int > > | ghost_indices_subset_data |

| unsigned int | my_pid |

| unsigned int | n_procs |

| MPI_Comm | communicator |

| bool | have_ghost_indices |

This class defines a model for the partitioning of a vector (or, in fact, any linear data structure) among processors using MPI.

The partitioner stores the global vector size and the locally owned range as a half-open interval [lower, upper). Furthermore, it includes a structure for the point-to-point communication patterns. It allows the inclusion of ghost indices (i.e. indices that a current processor needs to have access to, but are owned by another process) through an IndexSet. In addition, it also stores the other processors' ghost indices belonging to the current processor (see import_targets()), which are the indices where other processors might require information from. In a sense, these import indices form the dual of the ghost indices. This information is gathered once when constructing the partitioner, which obviates subsequent global communication steps when exchanging data.

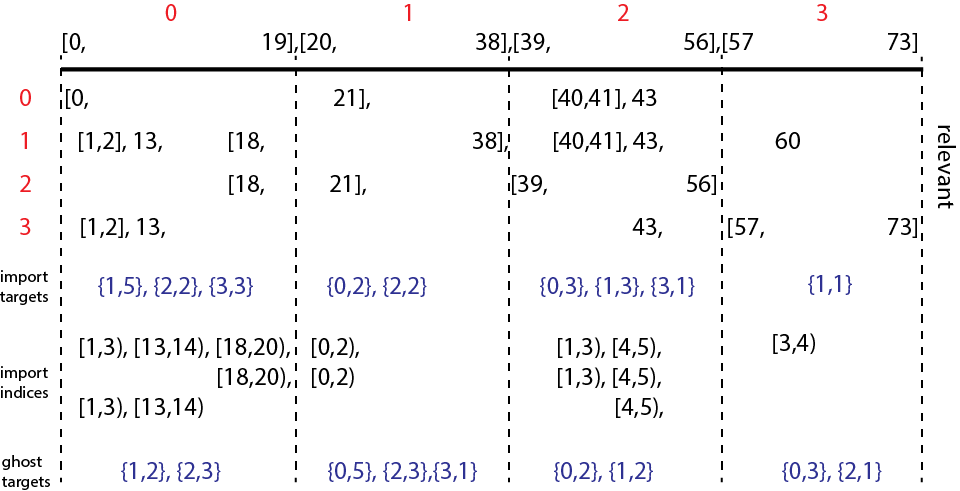

The figure below gives an example of index space [0,74) being split into four processes.

Here process 0 will import 5 DoFs from process 1 (first pair of import targets), which corresponds to the first 3 elements of its import indices. Whereas process 2 will import 3 DoFs from process 0, which corresponds to the first two elements of its import indices.

The partitioner includes a mechanism for converting global to local and local to global indices. Internally, this class stores vector elements using the convention as follows: The local range is associated with local indices [0,local_size), and ghost indices are stored consecutively in [local_size, local_size + n_ghost_indices). The ghost indices are sorted according to their global index.

This class handles the main ghost data exchange of LinearAlgebra::distributed::Vector through four functions:

The MPI communication routines are point-to-point communication patterns.

This partitioner class operates on a fixed set of ghost indices and must always be compatible with the ghost indices inside a vector. In some cases, one only wants to send some of the ghost indices present in a vector around, but without creating a copy of the vector with a suitable index set - think e.g. of local time stepping where different regions of a vector might be exchanged at different stages of a time step slice. This class supports that case by the following model: A vector is first created with the full ghosted index set. Then, a second Partitioner instance is created that sets ghost indices with a tighter index set as ghosts, but specifying the larger index set as the second argument to the set_ghost_indices() call. When data is exchanged, the export_to_ghosted_array_start() and import_from_ghosted_array_start() detect this case and only send the selected indices, taken from the full array of ghost entries.

Definition at line 124 of file partitioner.h.

| Utilities::MPI::Partitioner::Partitioner | ( | ) |

Empty Constructor.

Definition at line 25 of file partitioner.cc.

| Utilities::MPI::Partitioner::Partitioner | ( | const unsigned int | size | ) |

Constructor with size argument. Creates an MPI_COMM_SELF structure where there is no real parallel layout.

Definition at line 41 of file partitioner.cc.

| Utilities::MPI::Partitioner::Partitioner | ( | const IndexSet & | locally_owned_indices, |

| const IndexSet & | ghost_indices_in, | ||

| const MPI_Comm | communicator_in | ||

| ) |

Constructor with index set arguments. This constructor creates a distributed layout based on a given communicators, an IndexSet describing the locally owned range and another one for describing ghost indices that are owned by other processors, but we need to have read or write access to.

Definition at line 61 of file partitioner.cc.

| Utilities::MPI::Partitioner::Partitioner | ( | const IndexSet & | locally_owned_indices, |

| const MPI_Comm | communicator_in | ||

| ) |

Constructor with one index set argument. This constructor creates a distributed layout based on a given communicator, and an IndexSet describing the locally owned range. It allows to set the ghost indices at a later time. Apart from this, it is similar to the other constructor with two index sets.

Definition at line 80 of file partitioner.cc.

|

virtual |

Reinitialize the communication pattern. The first argument vector_space_vector_index_set is the index set associated to a VectorSpaceVector object. The second argument read_write_vector_index_set is the index set associated to a ReadWriteVector object.

Implements LinearAlgebra::CommunicationPatternBase.

Definition at line 98 of file partitioner.cc.

| void Utilities::MPI::Partitioner::set_owned_indices | ( | const IndexSet & | locally_owned_indices | ) |

Set the locally owned indices. Used in the constructor.

Definition at line 111 of file partitioner.cc.

| void Utilities::MPI::Partitioner::set_ghost_indices | ( | const IndexSet & | ghost_indices, |

| const IndexSet & | larger_ghost_index_set = IndexSet() |

||

| ) |

Set the ghost indices after the constructor has been called.

The optional parameter larger_ghost_index_set allows defining an indirect addressing into a larger set of ghost indices. This setup is useful if a distributed vector is based on that larger ghost index set but only a tighter subset should be communicated according to ghost_indices.

Definition at line 147 of file partitioner.cc.

| types::global_dof_index Utilities::MPI::Partitioner::size | ( | ) | const |

Return the global size.

| unsigned int Utilities::MPI::Partitioner::local_size | ( | ) | const |

Return the local size, i.e. local_range().second minus local_range().first.

| const IndexSet& Utilities::MPI::Partitioner::locally_owned_range | ( | ) | const |

Return an IndexSet representation of the local range. This class only supports contiguous local ranges, so the IndexSet actually only consists of one single range of data, and is equivalent to the result of local_range().

| std::pair<types::global_dof_index,types::global_dof_index> Utilities::MPI::Partitioner::local_range | ( | ) | const |

Return the local range. The returned pair consists of the index of the first element and the index of the element one past the last locally owned one.

| bool Utilities::MPI::Partitioner::in_local_range | ( | const types::global_dof_index | global_index | ) | const |

Return true if the given global index is in the local range of this processor.

| unsigned int Utilities::MPI::Partitioner::global_to_local | ( | const types::global_dof_index | global_index | ) | const |

Return the local index corresponding to the given global index. If the given global index is neither locally owned nor a ghost, an exception is thrown.

Note that the local index for locally owned indices is between 0 and local_size()-1, and the local index for ghosts is between local_size() and local_size()+n_ghost_indices()-1.

| types::global_dof_index Utilities::MPI::Partitioner::local_to_global | ( | const unsigned int | local_index | ) | const |

Return the global index corresponding to the given local index.

Note that the local index for locally owned indices is between 0 and local_size()-1, and the local index for ghosts is between local_size() and local_size()+n_ghost_indices()-1.

| bool Utilities::MPI::Partitioner::is_ghost_entry | ( | const types::global_dof_index | global_index | ) | const |

Return whether the given global index is a ghost index on the present processor. Returns false for indices that are owned locally and for indices not present at all.

| const IndexSet& Utilities::MPI::Partitioner::ghost_indices | ( | ) | const |

Return an IndexSet representation of all ghost indices.

| unsigned int Utilities::MPI::Partitioner::n_ghost_indices | ( | ) | const |

Return the number of ghost indices. Same as ghost_indices().n_elements(), but cached for simpler access.

| const std::vector<std::pair<unsigned int, unsigned int> >& Utilities::MPI::Partitioner::ghost_indices_within_larger_ghost_set | ( | ) | const |

In case the partitioner was built to define ghost indices as a subset of indices in a larger set of ghosts, this function returns the numbering in terms of ranges within that set. Similar structure as in an IndexSet, but tailored to be iterated over.

In case the partitioner did not take a second set of ghost indices into account, this subset is simply defined as the half-open interval [0, n_ghost_indices()).

| const std::vector<std::pair<unsigned int, unsigned int> >& Utilities::MPI::Partitioner::ghost_targets | ( | ) | const |

Return a list of processors (first entry) and the number of ghost degrees of freedom owned by that processor (second entry). The sum of the latter over all processors equals n_ghost_indices().

| const std::vector<std::pair<unsigned int, unsigned int> >& Utilities::MPI::Partitioner::import_indices | ( | ) | const |

Return a vector of ranges of local indices that we are importing during compress(), i.e., others' ghosts that belong to the local range. Similar structure as in an IndexSet, but tailored to be iterated over, and some indices may be duplicated. The returned pairs consists of the index of the first element and the index of the element one past the last one in a range.

| unsigned int Utilities::MPI::Partitioner::n_import_indices | ( | ) | const |

Number of import indices, i.e., indices that are ghosts on other processors and we will receive data from.

| const std::vector<std::pair<unsigned int, unsigned int> >& Utilities::MPI::Partitioner::import_targets | ( | ) | const |

Return a list of processors (first entry) and the number of degrees of freedom imported from it during compress() operation (second entry) for all the processors that data is obtained from, i.e., locally owned indices that are ghosts on other processors.

| bool Utilities::MPI::Partitioner::is_compatible | ( | const Partitioner & | part | ) | const |

Check whether the given partitioner is compatible with the partitioner used for this vector. Two partitioners are compatible if they have the same local size and the same ghost indices. They do not necessarily need to be the same data field. This is a local operation only, i.e., if only some processors decide that the partitioning is not compatible, only these processors will return false, whereas the other processors will return true.

Definition at line 428 of file partitioner.cc.

| bool Utilities::MPI::Partitioner::is_globally_compatible | ( | const Partitioner & | part | ) | const |

Check whether the given partitioner is compatible with the partitioner used for this vector. Two partitioners are compatible if they have the same local size and the same ghost indices. They do not necessarily need to be the same data field. As opposed to is_compatible(), this method checks for compatibility among all processors and the method only returns true if the partitioner is the same on all processors.

This method performs global communication, so make sure to use it only in a context where all processors call it the same number of times.

Definition at line 454 of file partitioner.cc.

| unsigned int Utilities::MPI::Partitioner::this_mpi_process | ( | ) | const |

Return the MPI ID of the calling processor. Cached to have simple access.

| unsigned int Utilities::MPI::Partitioner::n_mpi_processes | ( | ) | const |

Return the total number of MPI processor participating in the given partitioner. Cached to have simple access.

| const MPI_Comm& Utilities::MPI::Partitioner::get_communicator | ( | ) | const |

Return the MPI communicator underlying the partitioner object.

|

virtual |

Return the MPI communicator underlying the partitioner object.

Implements LinearAlgebra::CommunicationPatternBase.

| bool Utilities::MPI::Partitioner::ghost_indices_initialized | ( | ) | const |

Return whether ghost indices have been explicitly added as a ghost_indices argument. Only true if a reinit call or constructor provided that argument.

| void Utilities::MPI::Partitioner::export_to_ghosted_array_start | ( | const unsigned int | communication_channel, |

| const ArrayView< const Number > & | locally_owned_array, | ||

| const ArrayView< Number > & | temporary_storage, | ||

| const ArrayView< Number > & | ghost_array, | ||

| std::vector< MPI_Request > & | requests | ||

| ) | const |

Start the exports of the data in a locally owned array to the range described by the ghost indices of this class.

| communication_channel | Sets an offset to the MPI_Isend and MPI_Irecv calls that avoids interference with other ongoing export_to_ghosted_array_start() calls on different entries. Typically handled within the blocks of a block vector. |

| locally_owned_array | The array of data from which the data is extracted and sent to the ghost entries on a remote processor. |

| temporary_storage | A temporary storage array of length n_import_indices() that is used to hold the packed data from the locally_owned_array to be sent. Note that this array must not be touched until the respective export_to_ghosted_array_finish() call has been made because the model uses non-blocking communication. |

| ghost_array | The array that will receive the exported data, i.e., the entries that a remote processor sent to the calling process. Its size must either be n_ghost_indices() or equal the number of ghost indices in the larger index set that was given as second argument to set_ghost_indices(). In case only selected indices are sent, no guarantee is made regarding the entries that do not get set. Some of them might be used to organize the transfer and later reset to zero, so make sure you do not use them in computations. |

| requests | The list of MPI requests for the ongoing non-blocking communication that will be finalized in the export_to_ghosted_array_finish() call. |

This functionality is used in LinearAlgebra::distributed::Vector::update_ghost_values().

| void Utilities::MPI::Partitioner::export_to_ghosted_array_finish | ( | const ArrayView< Number > & | ghost_array, |

| std::vector< MPI_Request > & | requests | ||

| ) | const |

Finish the exports of the data in a locally owned array to the range described by the ghost indices of this class.

| ghost_array | The array that will receive the exported data started in the export_to_ghosted_array_start(). This must be the same array as passed to that function, otherwise the behavior is undefined. |

| requests | The list of MPI requests for the ongoing non-blocking communication that were started in the export_to_ghosted_array_start() call. This must be the same array as passed to that function, otherwise MPI will likely throw an error. |

This functionality is used in LinearAlgebra::distributed::Vector::update_ghost_values().

| void Utilities::MPI::Partitioner::import_from_ghosted_array_start | ( | const VectorOperation::values | vector_operation, |

| const unsigned int | communication_channel, | ||

| const ArrayView< Number > & | ghost_array, | ||

| const ArrayView< Number > & | temporary_storage, | ||

| std::vector< MPI_Request > & | requests | ||

| ) | const |

Start importing the data on an array indexed by the ghost indices of this class that is later accumulated into a locally owned array with import_from_ghosted_array_finish().

| vector_operation | Defines how the data sent to the owner should be combined with the existing entries, e.g., added into. |

| communication_channel | Sets an offset to the MPI_Isend and MPI_Irecv calls that avoids interference with other ongoing import_from_ghosted_array_start() calls on different entries. Typically handled within the blocks of a block vector. |

| ghost_array | The array of ghost data that is sent to a remote owner of the respective index in a vector. Its size must either be n_ghost_indices() or equal the number of ghost indices in the larger index set that was given as second argument to set_ghost_indices(). This or the subsequent import_from_ghosted_array_finish() function, the order is implementation-dependent, will set all data entries behind ghost_array to zero. |

| temporary_storage | A temporary storage array of length n_import_indices() that is used to hold the packed data from MPI communication that will later be written into the locally owned array. Note that this array must not be touched until the respective import_from_ghosted_array_finish() call has been made because the model uses non-blocking communication. |

| requests | The list of MPI requests for the ongoing non-blocking communication that will be finalized in the export_to_ghosted_array_finish() call. |

This functionality is used in LinearAlgebra::distributed::Vector::compress().

| void Utilities::MPI::Partitioner::import_from_ghosted_array_finish | ( | const VectorOperation::values | vector_operation, |

| const ArrayView< const Number > & | temporary_storage, | ||

| const ArrayView< Number > & | locally_owned_storage, | ||

| const ArrayView< Number > & | ghost_array, | ||

| std::vector< MPI_Request > & | requests | ||

| ) | const |

Finish importing the data from an array indexed by the ghost indices of this class into a specified locally owned array, combining the results according to the given input vector_operation.

| vector_operation | Defines how the data sent to the owner should be combined with the existing entries, e.g., added into. |

| temporary_storage | The same array given to the import_from_ghosted_array_start() call that contains the packed data from MPI communication. In thus function, it is combined at the corresponding entries described by the ghost relations according to vector_operation. |

| ghost_array | The array of ghost data that is sent to a remote owner of the respective index in a vector. Its size must either be n_ghost_indices() or equal the number of ghost indices in the larger index set that was given as second argument to set_ghost_indices(). This function will set all data entries behind ghost_array to zero for the implementation-dependent cases when it was not already done in the import_from_ghosted_array_start() call. |

| locally_owned_storage | The array of data where the resulting data sent by remote processes to the calling process will be accumulated into. |

| requests | The list of MPI requests for the ongoing non-blocking communication that have been initiated in the import_to_ghosted_array_finish() call. This must be the same array as passed to that function, otherwise MPI will likely throw an error. |

This functionality is used in LinearAlgebra::distributed::Vector::compress().

| std::size_t Utilities::MPI::Partitioner::memory_consumption | ( | ) | const |

Compute the memory consumption of this structure.

Definition at line 463 of file partitioner.cc.

|

private |

The global size of the vector over all processors

Definition at line 552 of file partitioner.h.

|

private |

The range of the vector that is stored locally.

Definition at line 557 of file partitioner.h.

|

private |

The range of the vector that is stored locally. Extracted from locally_owned_range for performance reasons.

Definition at line 563 of file partitioner.h.

|

private |

The set of indices to which we need to have read access but that are not locally owned

Definition at line 569 of file partitioner.h.

|

private |

A variable caching the number of ghost indices. It would be expensive to use ghost_indices.n_elements() to compute this.

Definition at line 575 of file partitioner.h.

|

private |

An array that contains information which processors my ghost indices belong to and how many those indices are

Definition at line 581 of file partitioner.h.

|

private |

The set of (local) indices that we are importing during compress(), i.e., others' ghosts that belong to the local range. Similar structure as in an IndexSet, but tailored to be iterated over, and some indices may be duplicates.

Definition at line 589 of file partitioner.h.

|

private |

A variable caching the number of ghost indices. It would be expensive to compute it by iterating over the import indices and accumulate them.

Definition at line 595 of file partitioner.h.

|

private |

The set of processors and length of data field which send us their ghost data

Definition at line 601 of file partitioner.h.

|

private |

An array that caches the number of chunks in the import indices per MPI rank. The length is import_indices_data.size()+1.

Definition at line 607 of file partitioner.h.

|

private |

A variable caching the number of ghost indices in a larger set of indices given by the optional argument to set_ghost_indices().

Definition at line 613 of file partitioner.h.

|

private |

An array that caches the number of chunks in the import indices per MPI rank. The length is ghost_indices_subset_data.size()+1.

Definition at line 619 of file partitioner.h.

|

private |

The set of indices that appear for an IndexSet that is a subset of a larger set. Similar structure as in an IndexSet within all ghost indices, but tailored to be iterated over.

Definition at line 626 of file partitioner.h.

|

private |

The ID of the current processor in the MPI network

Definition at line 631 of file partitioner.h.

|

private |

The total number of processors active in the problem

Definition at line 636 of file partitioner.h.

|

private |

The MPI communicator involved in the problem

Definition at line 641 of file partitioner.h.

|

private |

A variable storing whether the ghost indices have been explicitly set.

Definition at line 646 of file partitioner.h.

1.8.14

1.8.14